We report all the standard metrics (widget shown, widget visible, and widget click) and their derived metrics (CTR, VCTR, and visibility), restricted to cases where the recommendation widget appeared on a page that the user reached by clicking on that same recommendation widget.
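The derived metrics are ratios of the raw counts, and the same formulas apply to the overall counts and to the after-click counts. The sketch below assumes the usual definitions (CTR = clicks / shown, VCTR = clicks / visible, visibility = visible / shown); `WidgetCounts` and `derived_metrics` are hypothetical names for illustration, not part of any LiftIgniter API.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class WidgetCounts:
    """Raw event counts for one widget, either overall or restricted to after-click pages."""
    shown: int
    visible: int
    clicks: int


def safe_ratio(numerator: int, denominator: int) -> Optional[float]:
    # No data (a zero denominator) is reported as None rather than raising.
    return numerator / denominator if denominator else None


def derived_metrics(counts: WidgetCounts) -> Dict[str, Optional[float]]:
    # Assumed definitions: CTR = clicks / shown, VCTR = clicks / visible,
    # visibility = visible / shown.
    return {
        "ctr": safe_ratio(counts.clicks, counts.shown),
        "vctr": safe_ratio(counts.clicks, counts.visible),
        "visibility": safe_ratio(counts.visible, counts.shown),
    }


# Hypothetical counts for one widget on one day.
overall = derived_metrics(WidgetCounts(shown=10_000, visible=6_000, clicks=100))
after_click = derived_metrics(WidgetCounts(shown=100, visible=80, clicks=30))
```

With these made-up counts, the overall CTR is 1% while the CTR after click is 30%.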

Simple example with two widgets

For instance, suppose your website has two widgets: bottom-widget and right-rail. A user clicks on bottom-widget and arrives at a new page. On that page, the bottom-widget events (widget shown, widget visible, widget click) are counted in the shown after click, visible after click, and click after click counts for bottom-widget, respectively. Events for right-rail on that page are not counted as metrics after click.
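The attribution rule in the example can be sketched as a filter over pageviews: only pageviews reached through a widget click qualify, and on those pages only the events of that same widget are counted. The `Pageview` record and `count_after_click` function are hypothetical, assuming each pageview already knows which widget (if any) the user clicked to reach it.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class Pageview:
    # Widget whose click brought the user to this page; None for any other arrival.
    arrived_via: Optional[str]
    # (widget, event_type) pairs fired on this page; event_type is
    # "shown", "visible", or "click".
    events: List[Tuple[str, str]] = field(default_factory=list)


def count_after_click(pageviews: List[Pageview]) -> Counter:
    """Tally after-click events, keyed by (widget, event_type)."""
    counts: Counter = Counter()
    for pv in pageviews:
        if pv.arrived_via is None:
            continue  # not reached through a widget click
        for widget, event_type in pv.events:
            # Only the widget that was clicked to reach this page counts.
            if widget == pv.arrived_via:
                counts[(widget, event_type)] += 1
    return counts


# The scenario above: the user clicked bottom-widget to reach this page.
page = Pageview(
    arrived_via="bottom-widget",
    events=[
        ("bottom-widget", "shown"),
        ("bottom-widget", "visible"),
        ("right-rail", "shown"),
        ("right-rail", "visible"),
    ],
)
after_click = count_after_click([page])
```

The result holds one shown and one visible event for bottom-widget and nothing for right-rail, matching the example.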

The reasons for considering these metrics

There are two main advantages:

- It measures our performance among engaged users who have definitely noticed the recommendation area: One of the main problems with interpreting metrics such as CTR is that we don't know what fraction of users actually even noticed the recommendation area. When we look at metrics after click, we are restricting to a subset of the users who have given a clear indication that they are interested in the recommendations. The performance of our recommendations on this engaged subset of users provides a different way of measuring our performance.

- It is more robust and less subject to day-to-day fluctuations caused by variation in traffic quality: Suppose that one day an article of yours goes viral on Reddit and brings in a lot of shallow traffic. The CTR will probably go down, because this shallow "drive-by" traffic isn't interested in staying on the site. CTR after click, on the other hand, is likely to remain stable, since the users who do click are probably just as likely to stay around as usual.
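The robustness argument is about pooling: overall CTR averages over everyone who saw the widget, so a flood of drive-by visitors drags it down, while CTR after click only includes visitors who already clicked. The sketch below uses entirely hypothetical numbers, assumes the widget appears on every destination page (so shown after click equals clicks), and assumes clickers from both segments click again at the same rate, as the text argues.

```python
from typing import List, Tuple


def pooled_rate(segments: List[Tuple[int, int]]) -> float:
    """Pool (denominator, numerator) pairs across traffic segments into one rate."""
    total_denominator = sum(d for d, _ in segments)
    total_numerator = sum(n for _, n in segments)
    return total_numerator / total_denominator


# Hypothetical day-level counts, for illustration only.
# Regular traffic: 10,000 widget shown, 200 clicks (2% CTR).
# Viral drive-by traffic: 30,000 widget shown, 60 clicks (0.2% CTR).
normal_ctr = pooled_rate([(10_000, 200)])
viral_ctr = pooled_rate([(10_000, 200), (30_000, 60)])

# After click, only users who clicked remain (shown after click = clicks here).
# Assume clickers from either segment click again at the same 30% rate.
normal_ctr_after_click = pooled_rate([(200, 60)])
viral_ctr_after_click = pooled_rate([(200, 60), (60, 18)])

print(f"CTR: {normal_ctr:.2%} -> {viral_ctr:.2%}")
print(f"CTR after click: {normal_ctr_after_click:.2%} -> {viral_ctr_after_click:.2%}")
```

Under these assumptions, CTR falls from 2% to 0.65% on the viral day, while CTR after click stays at 30%.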

Two reasons for data being unavailable or zero, plus a data size issue

If you see zero values or no data for the metrics after click, there are two possible reasons:

- We are not recording clicked item views: This could be because you are using setNoTag in your tracking implementation to strip off URL tags, or you are doing a URL rewrite or redirection after click. We are therefore unable to identify, for a given pageview, whether it arose as a result of a click. Note that if this is the case, you will also not be able to get 10-second and 3-minute clicked item views.

- We aren't showing the recommendation widget on the pages for the items we are recommending: For instance, for a homepage recommendation widget, users who click on an item in the widget won't see the widget again on the page they arrive at. So all the "after click" metrics for this widget will be zero.
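The two causes can be told apart with a rough check, because the first one (no clicked item views recorded) also wipes out clicked item views, while the second one leaves them intact. The function below is a hypothetical triage sketch; its inputs are assumed to be counts you can read off your analytics for the same widget and time period.

```python
def diagnose_after_click(widget_clicks: int, clicked_item_views: int,
                         shown_after_click: int) -> str:
    """Rough triage for zero after-click metrics, following the two causes above."""
    if widget_clicks == 0:
        return "no widget clicks, so there is nothing to measure after click"
    if clicked_item_views == 0:
        # Clicks occur, but no pageview is recognized as click-driven: URL tags
        # may be stripped (setNoTag) or lost in a rewrite/redirect after click.
        return "clicked item views are not being recorded"
    if shown_after_click == 0:
        # Click-driven pageviews are recorded, but the widget never appears on them.
        return "widget is not shown on the pages it recommends"
    return "after-click data is being collected"
```

For a homepage widget, for example, you would expect the second message: clicked item views are recorded, but shown after click stays at zero.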

Another note: If your overall traffic level or your CTR on the widget is low, you may get too few "after click" events for the metrics to be statistically robust. For instance, if your widget is shown 10,000 times a day and gets a 1% CTR, it receives about 100 clicks a day, so you have at most about 100 "shown after click" events to work with. With a sample that small, the CTR after click is too noisy to support firm conclusions. You can address this problem by aggregating data over longer time periods (e.g., viewing at a weekly instead of a daily granularity).
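One way to see how much noise a small sample carries is to put a confidence interval around the rate. The sketch below uses the Wilson score interval (a standard binomial interval that behaves better than the plain normal approximation at small counts) with hypothetical numbers: a 30% CTR after click measured on 100 events in one day versus 700 events over a week.

```python
import math
from typing import Tuple


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a rate; z = 1.96 gives roughly 95% coverage."""
    if trials <= 0:
        raise ValueError("the rate is undefined without any trials")
    p = successes / trials
    z2 = z * z
    denom = 1 + z2 / trials
    center = (p + z2 / (2 * trials)) / denom
    half_width = (z / denom) * math.sqrt(p * (1 - p) / trials + z2 / (4 * trials * trials))
    return center - half_width, center + half_width


# Hypothetical: 30 clicks after click on 100 shown-after-click events in one day,
# versus the same rate aggregated over a week (210 of 700).
daily = wilson_interval(30, 100)
weekly = wilson_interval(210, 700)
```

The daily interval spans roughly 22% to 40%, while the weekly one narrows to about 27% to 33.5%, which is why weekly granularity gives a more usable number.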

Comparing these values with the overall values

As a general rule, here is how the counts compare (unless the data is unavailable or zero for reasons discussed above):

click after click < visible after click < shown after click ~ widget click < widget visible < widget shown
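The ordering above doubles as a sanity check on exported counts. This hypothetical sketch flags any pair that breaks it; it tolerates ties (the text writes strict "<" as a general rule) and skips the approximate "~" between shown after click and widget click, since that relation is not exact.

```python
from typing import Dict, List

# Pairs (smaller, larger) from the usual ordering of counts. The "~" between
# shown after click and widget click is only approximate, so it is not checked.
EXPECTED_ORDER = [
    ("click_after_click", "visible_after_click"),
    ("visible_after_click", "shown_after_click"),
    ("widget_click", "widget_visible"),
    ("widget_visible", "widget_shown"),
]


def ordering_violations(counts: Dict[str, int]) -> List[str]:
    """List every expected 'smaller <= larger' relation that the counts break."""
    return [
        f"{smaller} ({counts[smaller]}) > {larger} ({counts[larger]})"
        for smaller, larger in EXPECTED_ORDER
        if counts[smaller] > counts[larger]
    ]


# Hypothetical daily counts for one widget.
counts = {
    "widget_shown": 10_000,
    "widget_visible": 6_000,
    "widget_click": 100,
    "shown_after_click": 95,
    "visible_after_click": 80,
    "click_after_click": 30,
}
```

These made-up counts produce no violations; raising `click_after_click` to 90 would flag it as exceeding `visible_after_click`.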

More interesting are the ratios of the numbers. Usually, the CTR after click is much larger than the overall CTR, and the same holds for VCTR and visibility. Essentially, people who have clicked once are much more likely to send further signals of engagement with the recommendations.

| Metric after click | Typical range | Associated overall metric | Typical ratio (after click / overall) |
|---|---|---|---|
| CTR After Click | 5% to 50% | CTR | 2 to 20 times the CTR |
| VCTR After Click | 6% to 60% | VCTR | 1.5 to 10 times the VCTR |
| Visibility After Click | 30% to 100% | Visibility | 1 to 3 times the visibility |
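The ratio column in the table above can be turned into a quick check of whether your own numbers look typical. This is a hypothetical sketch: the ranges are copied from the table, and the function simply divides each after-click value by its overall counterpart for whichever metrics you supply.

```python
from typing import Dict, Tuple

# Typical ratio (after-click value / overall value), taken from the table above.
TYPICAL_RATIO: Dict[str, Tuple[float, float]] = {
    "ctr": (2.0, 20.0),
    "vctr": (1.5, 10.0),
    "visibility": (1.0, 3.0),
}


def after_click_lift(overall: Dict[str, float],
                     after_click: Dict[str, float]) -> Dict[str, Tuple[float, bool]]:
    """Return (ratio, within_typical_range) for each metric present in both inputs."""
    report = {}
    for metric, (low, high) in TYPICAL_RATIO.items():
        if metric not in overall or metric not in after_click:
            continue  # metric not supplied; skip it
        ratio = after_click[metric] / overall[metric]
        report[metric] = (ratio, low <= ratio <= high)
    return report


# CTR figures from the example later in this article: 5.12% overall, 32.82% after click.
lift = after_click_lift({"ctr": 0.0512}, {"ctr": 0.3282})
```

For the CTR example, the ratio is about 6.41, comfortably inside the typical 2 to 20 range.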

Examples

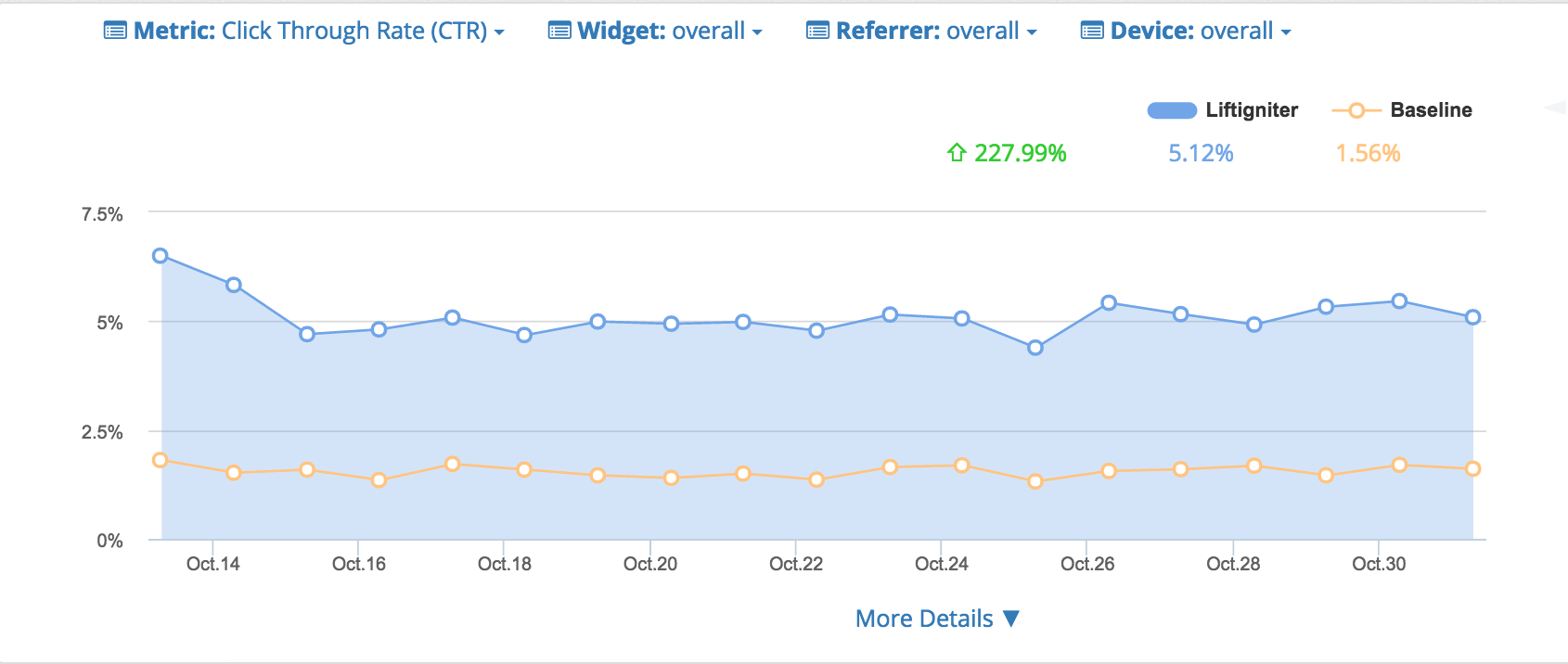

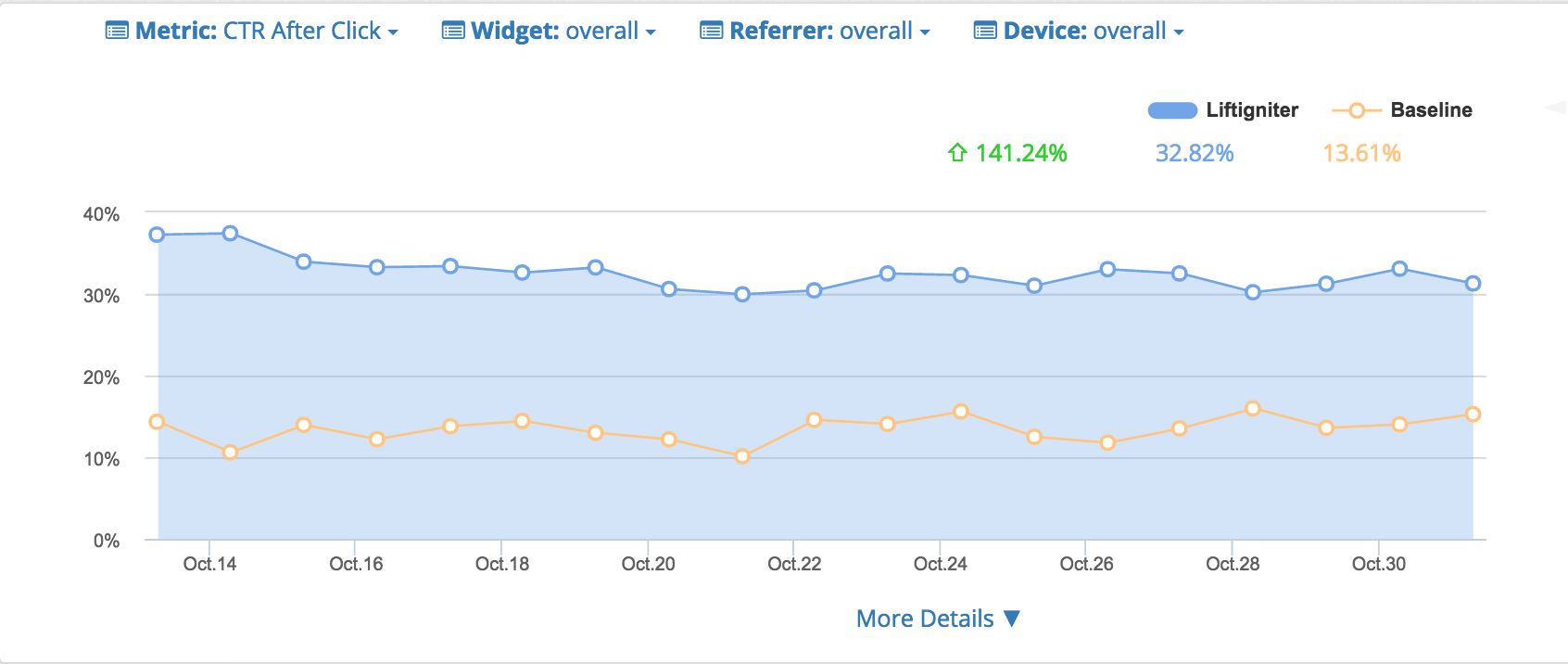

Here's a comparison of CTR with CTR after click. The CTR after click for LiftIgniter of 32.82% is over 6 times the CTR for LI of 5.12%. Data is from the LiftIgniter Analytics Panel.

Overall CTR numbers

CTR after click numbers

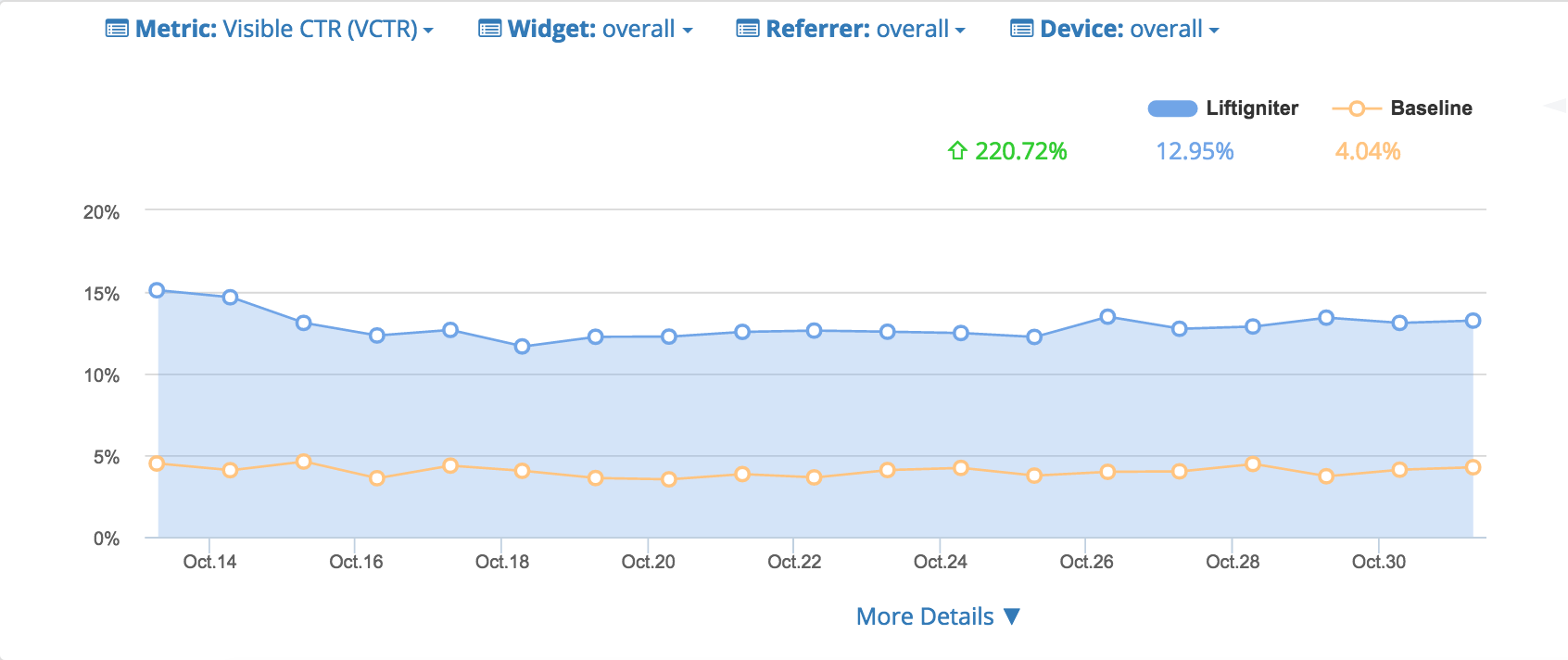

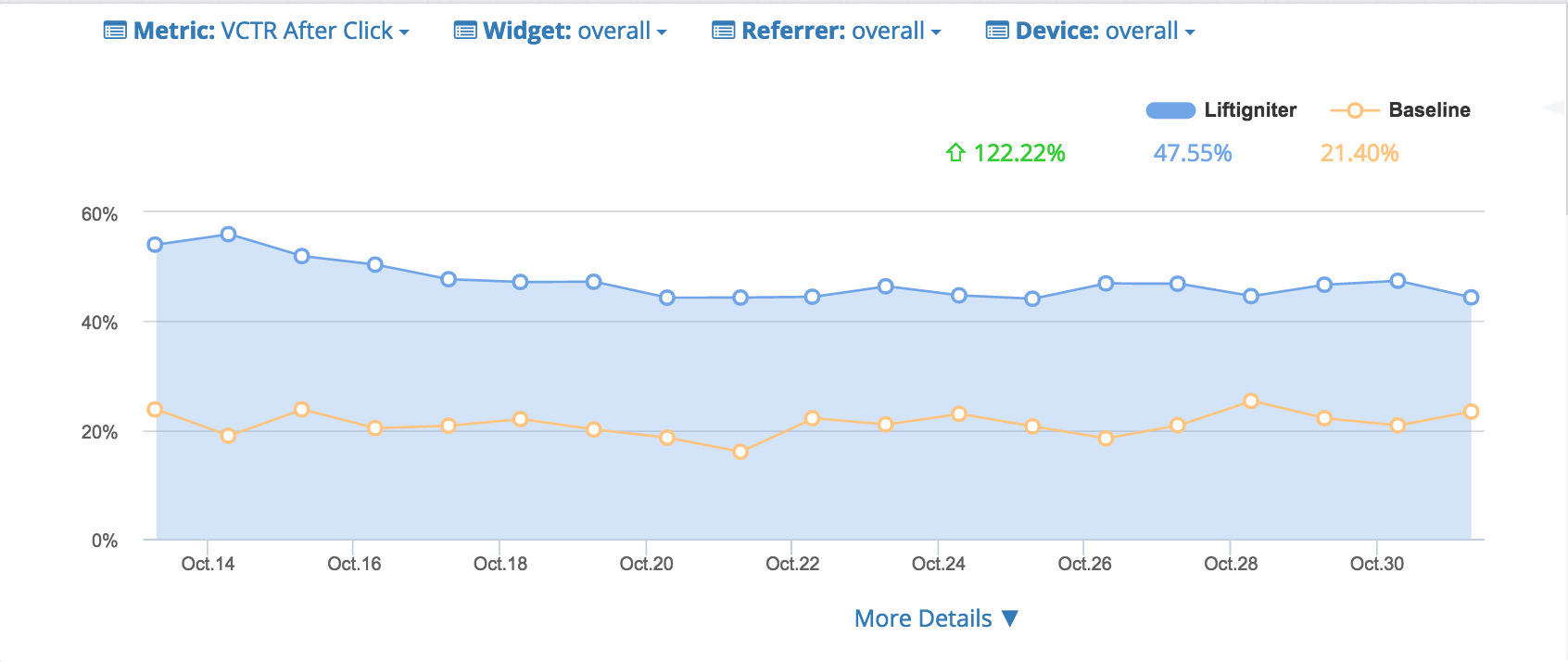

Here are the corresponding VCTR numbers.

Overall VCTR numbers

VCTR after click numbers

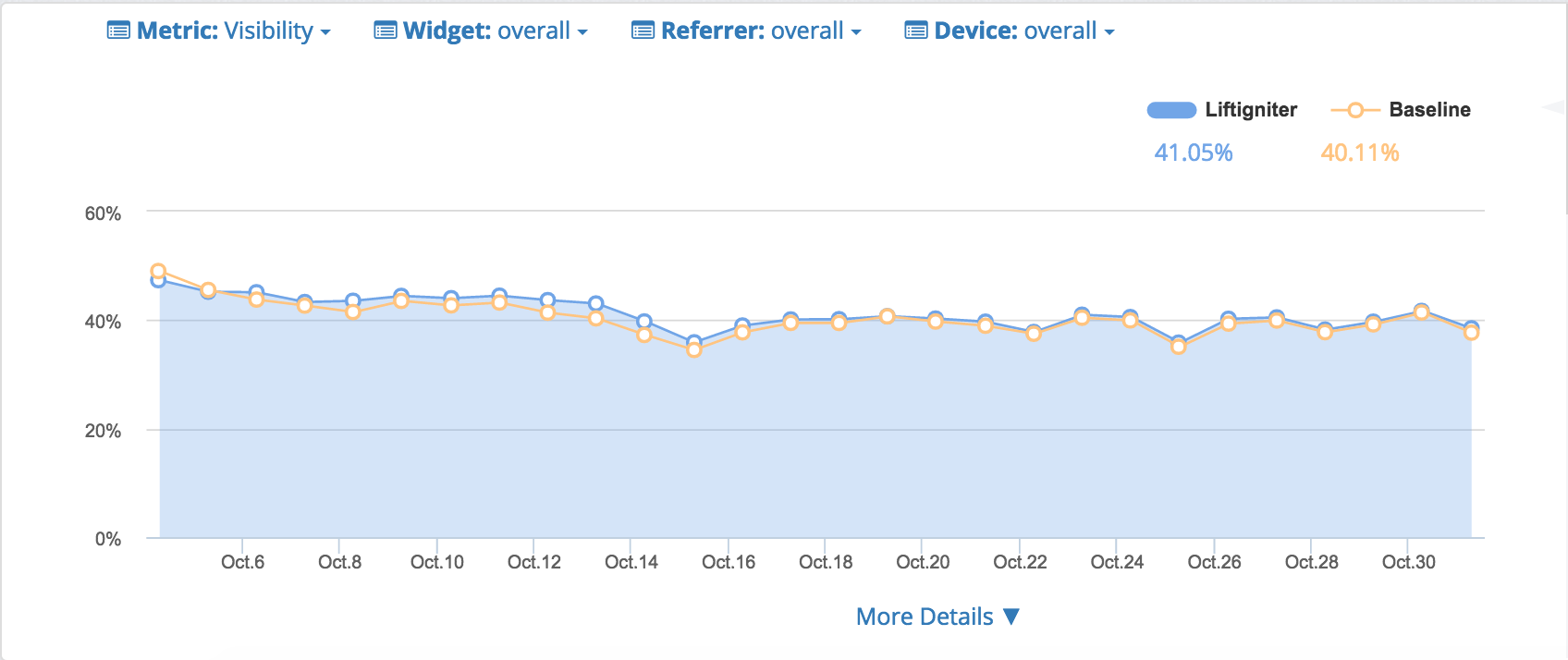

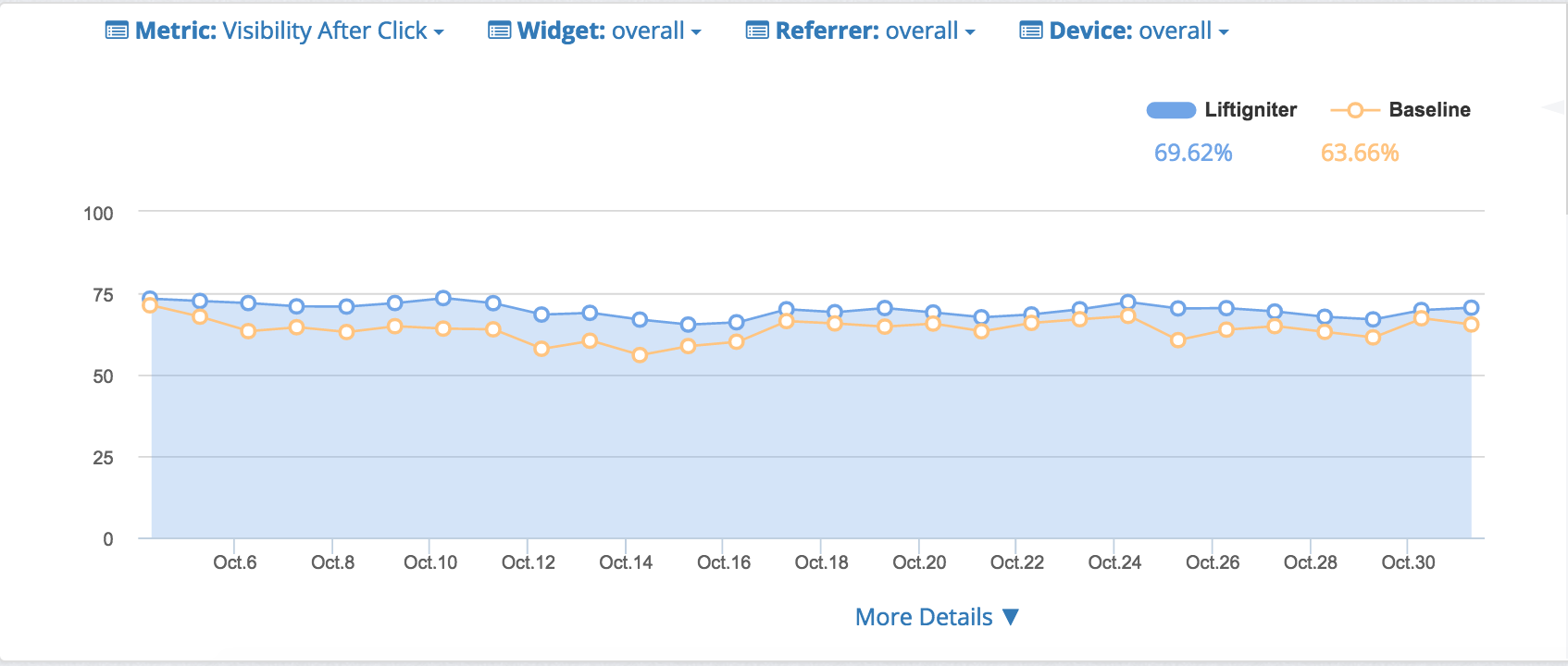

Here are similar numbers for visibility.

Overall visibility numbers

Visibility after click numbers