This is a beta feature in the LiftIgniter Lab that lets you test and debug model query results. It does not offer any functionality beyond what is already available through the model query API, but it makes the results easier to view if you are using standard field names.

The panel can be accessed at https://lab.liftigniter.com/modelQuery if you are logged in.

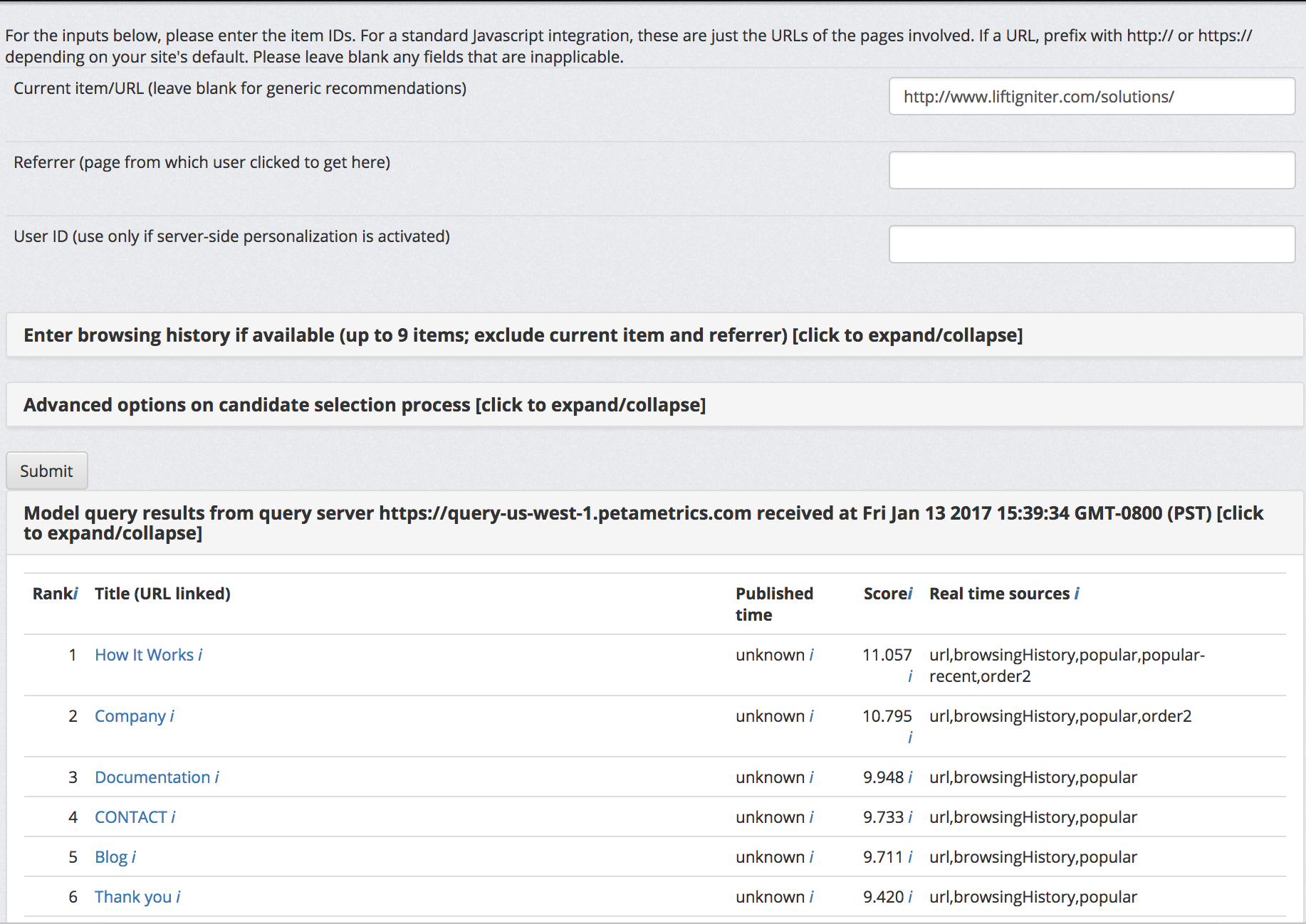

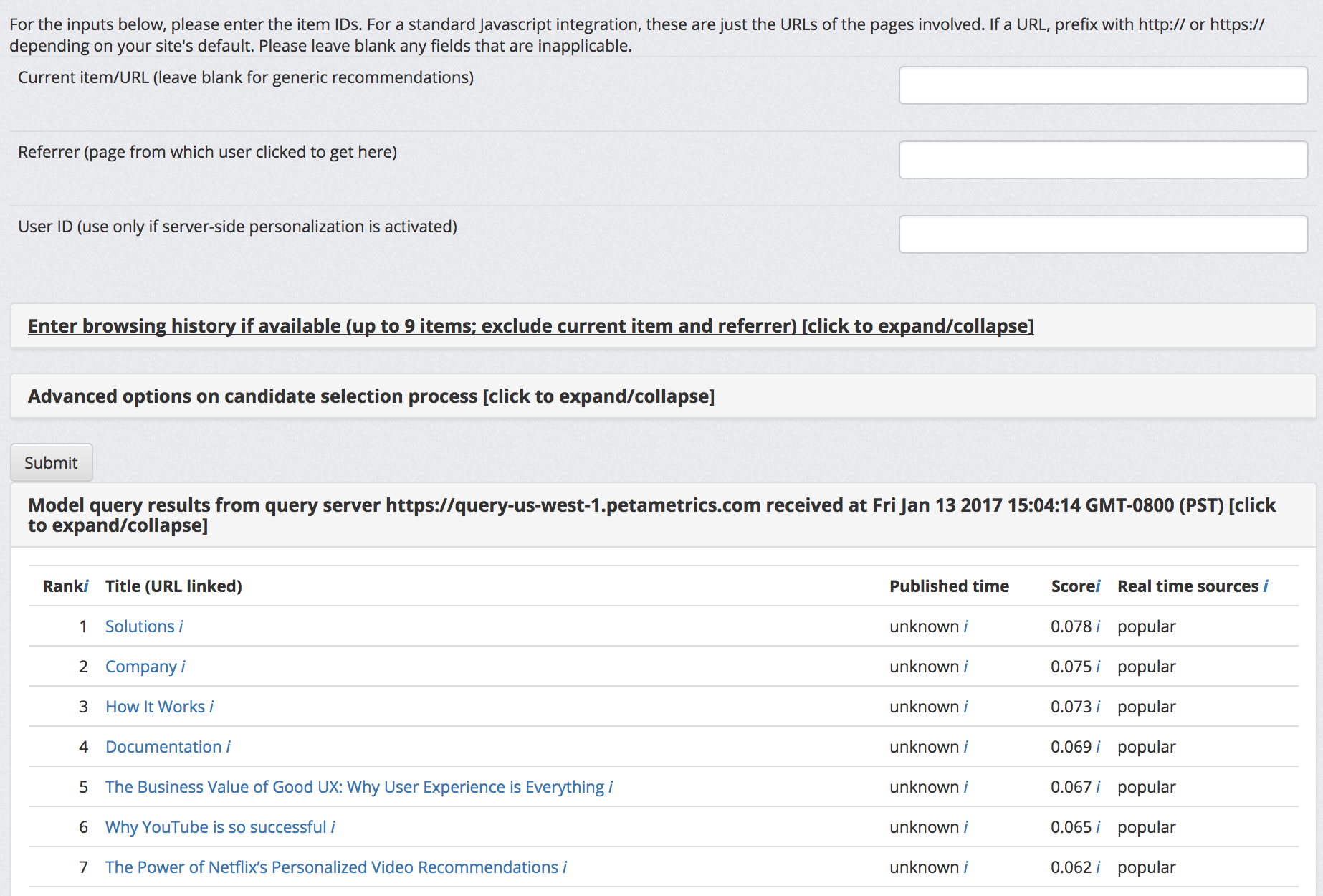

Empty model query for LiftIgniter

When you load the page, the lab server makes an empty query to LiftIgniter's server (query.petametrics.com). The response is rendered on the frontend as a table, as shown in the screenshot above. Note that the table is collapsed by default, so you will need to expand it.

Interpreting the query response

We display only a fixed set of fields in this interface (unlike our API, where we can return an arbitrary set of requested fields). This is to provide a predictable user experience that addresses most basic debugging use cases. The fields are as follows:

- Rank, the first column, corresponds to the requested field called "rank" and is the rank of the recommended item.

- Title (URL linked) prints the title (field "title") with a hyperlink to the URL (field "url"). If you are using an ID (field "id") different from the URL, the ID is shown in parentheses. The values of the fields "description" and "sponsored" are available if you hover over the information icon (i) after the title.

- Published time is the published_time field, if you have sent it to us in ISO 8601 format.

- Score is the field called adjustedScore (if available in the query response) or rawScore (if no adjustedScore is available). If you hover over the information icon (i), you will see score diagnostics (field "scoreDiagnostics"). These diagnostics give partial insight into how the item was scored, but they do not expose every detail of the scoring mechanism.

- Real time sources corresponds to the field "realTimeSources" (that you can specify in "arrayRequestFields"). It lists the different real-time sources that were used to generate the item as a candidate. If the candidate did not come from real-time sources, this field will say "N/A". If there are multiple real-time sources, they will be listed with comma separators.
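
The column rules above can be sketched in a few lines of Python. This is an illustration only, not the Lab's actual rendering code: the function names are hypothetical, and each item is assumed to be a plain dictionary keyed by the field names listed above.

```python
from typing import Any, Dict, List


def display_score(item: Dict[str, Any]) -> Any:
    """Prefer adjustedScore; fall back to rawScore when it is absent."""
    if item.get("adjustedScore") is not None:
        return item["adjustedScore"]
    return item.get("rawScore")


def display_sources(item: Dict[str, Any]) -> str:
    """Join real-time sources with commas, or show "N/A" when there are none."""
    sources: List[str] = item.get("realTimeSources") or []
    return ", ".join(sources) if sources else "N/A"


def to_row(item: Dict[str, Any]) -> Dict[str, Any]:
    """Build one table row from a single recommended item (assumed shape)."""
    title = item.get("title", "")
    # Show the ID in parentheses only when it differs from the URL.
    if item.get("id") and item.get("id") != item.get("url"):
        title = f"{title} ({item['id']})"
    return {
        "Rank": item.get("rank"),
        "Title": title,
        "Published time": item.get("published_time"),
        "Score": display_score(item),
        "Real time sources": display_sources(item),
    }
```

Calling `to_row` on an item that has only a rawScore and no real-time sources would yield a row whose Score is the rawScore and whose last column reads "N/A".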

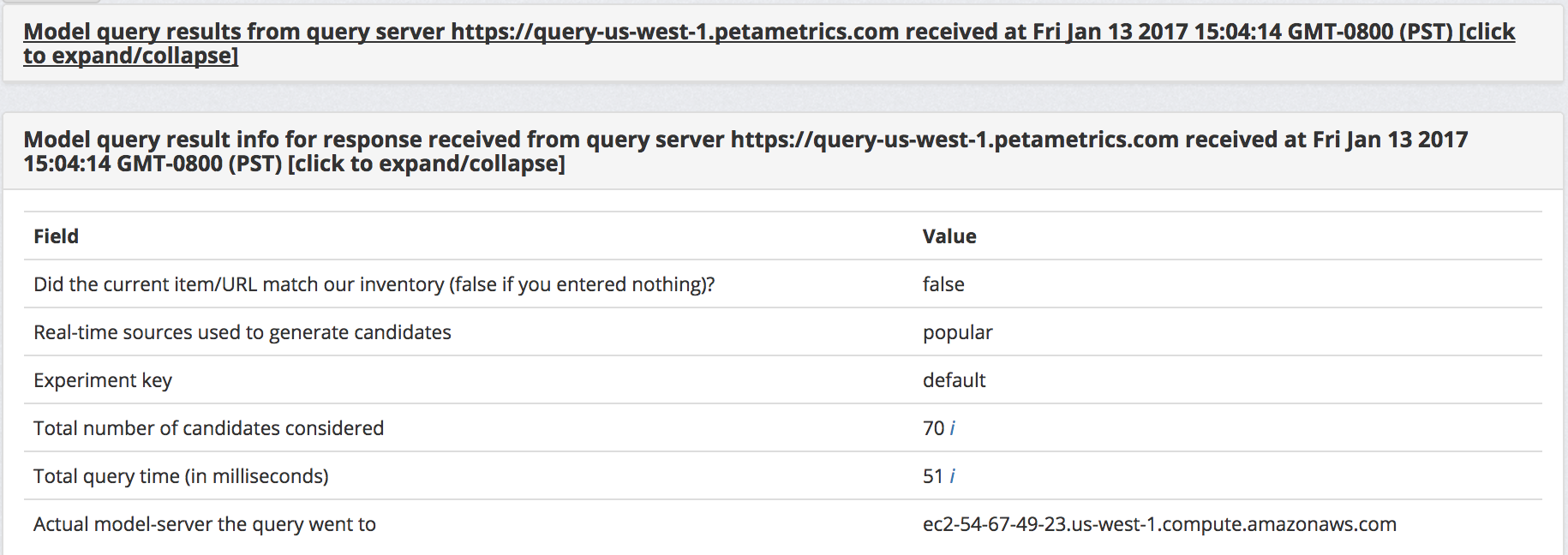

Query info below the response

Query info for empty model query to LiftIgniter

Below the query response, you will see a section giving information on the model query result. You can get the same information from the API by including "getQueryInfo": true in your model query.

- "Did the current item/URL match our inventory (false if you entered nothing)?" corresponds to the field matchedInventory.

- "Real-time sources used to generate candidates" corresponds to realTimeSources. It is the union of the real-time sources shown for each candidate.

- "Experiment key" corresponds to experimentKey. It is an internal key that our system uses when traffic is segmented across different experiments. In most cases, it will show "default".

- "Total number of candidates considered" corresponds to the field numCandidates. If you hover over the information icon to the right, you will see the number of candidates at various stages of the query processing pipeline.

- "Total query time (in milliseconds)" corresponds to the field queryTime. If you hover over the information icon to the right, you will see the cumulative time up to various stages of query processing.

- "Actual model-server the query went to" is the DNS name of the machine the query went to. This isn't directly relevant to you (as these servers are not directly accessible) but can be useful to include in debugging information you send to us if there are issues.
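
As a rough illustration of the fields above, the following Python sketch enables query info on a payload, computes the union of per-candidate real-time sources, and prints a short summary. The helper names are hypothetical, the payload is assumed to be a plain dictionary, and the query info is assumed to arrive as a flat dictionary keyed by the field names listed above; check the Model Query API documentation for the actual request and response layout.

```python
from typing import Any, Dict, Iterable, List


def with_query_info(base: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of a model query payload with getQueryInfo set to true."""
    payload = dict(base)
    payload["getQueryInfo"] = True
    return payload


def union_of_sources(items: Iterable[Dict[str, Any]]) -> List[str]:
    """Union of each candidate's realTimeSources, sorted for stable output."""
    seen = set()
    for item in items:
        seen.update(item.get("realTimeSources") or [])
    return sorted(seen)


def summarize(info: Dict[str, Any]) -> List[str]:
    """One line per query info field; the labels here are shortened assumptions."""
    labels = [
        ("Matched inventory", "matchedInventory"),
        ("Real-time sources", "realTimeSources"),
        ("Experiment key", "experimentKey"),
        ("Candidates considered", "numCandidates"),
        ("Query time (ms)", "queryTime"),
    ]
    return [f"{label}: {info.get(field)}" for label, field in labels]
```

When reporting an issue, the output of `summarize` could be pasted alongside the model-server name shown in the panel.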

Query parameters

The interface does not let you specify arbitrary query parameters, but it does expose a few. After editing the parameters, click Submit again. When the results refresh, the timestamp right above the results changes to show the time of the most recent refresh.

Some additional parameters can be specified by expanding the sections for "Enter browsing history" and "Advanced options". You can learn about these options by looking up the documentation for the Model Query API.